- How to install SAP Data Intelligence 3.0 on-premis...

former_member25

04-13-2020

11:44 PM

SAP Data Intelligence 3.0 features a completely redesigned installation and deployment method. Those who previously worked with SAP Data Hub 2.x will see that we've removed the traditional bash and python scripts that were used to perform the installations. In fact, you will not even be able to download SAP Data Intelligence from the Service Marketplace; instead, you will deploy a containerized installer in the target Kubernetes cluster. The new installer is responsible for mirroring the docker images and deploying the Kubernetes resources from within the Kubernetes cluster.

Below is a high-level summary of the new changes with regards to installation:

Prerequisites:

For a complete list of prerequisites please refer to the official SAP Data Intelligence 3.0 Installation Guide

Step 1: Download the SLC Bridge tool

In this guide it is assumed you are using a Linux machine, but commands in Windows or MacOS should be identical.

To deploy the installer into Kubernetes you will need the SLC Bridge (slcb) binary tool which can be downloaded from the SAP Service Marketplace here. Upload this file into your jumpbox.

If you download the tool for linux the filename will misleadingly also have a Windows .EXE extension. Regardless of the operating system you are using, to make it easier to run commands I recommend renaming the SLCB01_XX_.EXE file to slcb and placing in the /usr/bin directory. You may also need to make it executable using chmod.

Step 2: Initialize the SLC Bridge pod in Kubernetes

If you haven't done so already ensure that your kubectl tool is configured to communicate with your Kubernetes cluster. A simple way to check is to look up the nodes of your cluster.

From your workstation execute the following command:

You will be prompted a series of questions. Their descriptions can be found in the official installation guide here. Note: At this time there is no difference between "Typical" and "Expert" deployment mode.

When choosing between exposing the installer web UI using a LoadBalancer or a NodePort service a LoadBalancer is the most common and preferred method.

To monitor or troubleshoot the status of the initialization you can query the Kubernetes namespace where the SLC Bridge was deployed. By default this namespace is set to sap-slcbridge

Finally, in the terminal output of the slcb tool you should see the complete URL to the SLC Bridge Web UI labelled as slcbridgebase-service. Make a note of this IP address and port number. If you chose to deploy SLC Bridge using NodePort service then use the external/public IP address of one of your Kubernetes worker nodes instead (Not the workstation IP address)

Step 3: Generate stack.xml file in SAP Maintenance Planner

Go to the SAP Maintenance Planner at https://apps.support.sap.com/sap/support/mp and click on "Plan a new system" -> "Plan"

On the left menu, select "Container Based" -> "SAP Data Intelligence 3" -> 3.0 (03/2020)

Note on patches: SAP Maintenance Planner will automatically select the latest patch for a given service pack. The latest patch version of a service pack is not displayed in the SAP Maintenance Planner.

You must also specify which stack you want to install. Starting with SAP Data Intelligence 3.0 you can choose from three different installation stacks with an option to skip the deployment of the Vora database and the Diagnostics Framework (Kibana/Grafana).

Each stack option is described in the official SAP Data Intelligence Installation guide.

The Select Files step gives you the opportunity to download the slcb tool directly from the SAP Maintenance Planner but as it was already installed in step 1 we can skip this step.

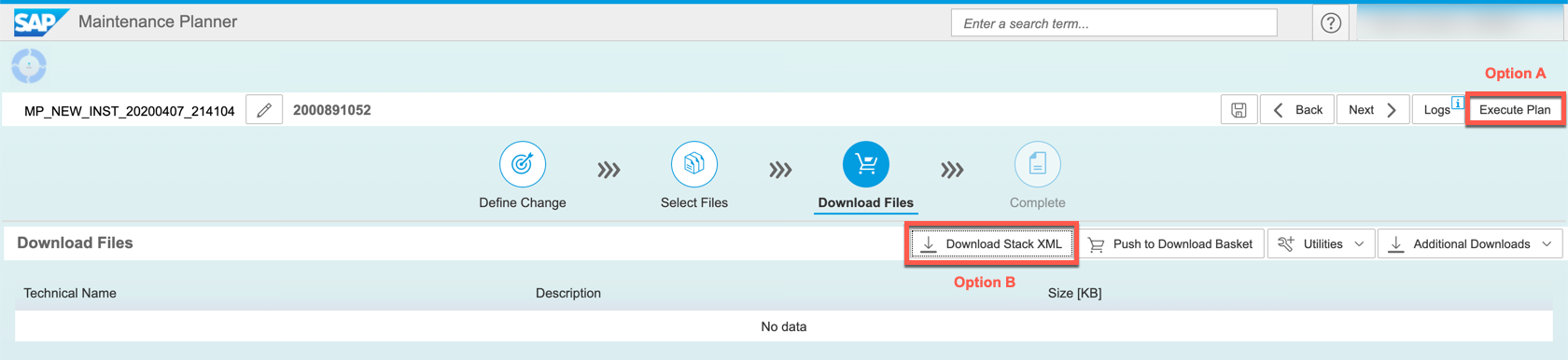

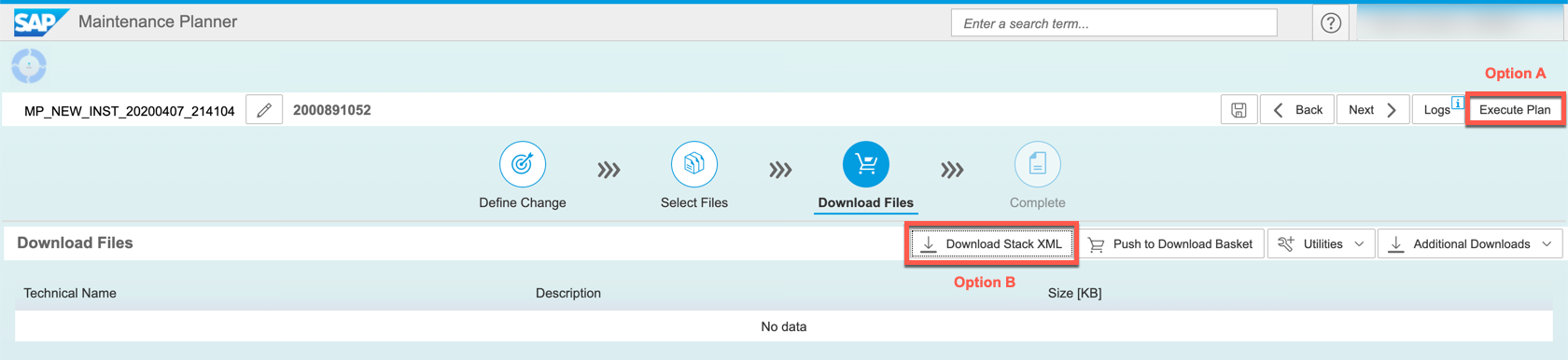

Confirm the empty selection and click next to continue to the Download Files step

If your Kubernetes cluster is accessible from the public internet (e.g. deployed in the public cloud such as AWS) then proceed to option A in this guide.

If your cluster is not accessible from the public internet (e.g. on-premise Kubernetes hidden behind a corporate proxy, on a private cloud network, etc) or you prefer to use the command line to install SAP Data Intelligence then proceed to option B in this guide.

Option A: Deploying MP_stack.xml file to SLC Bridge via Maintenance Planner

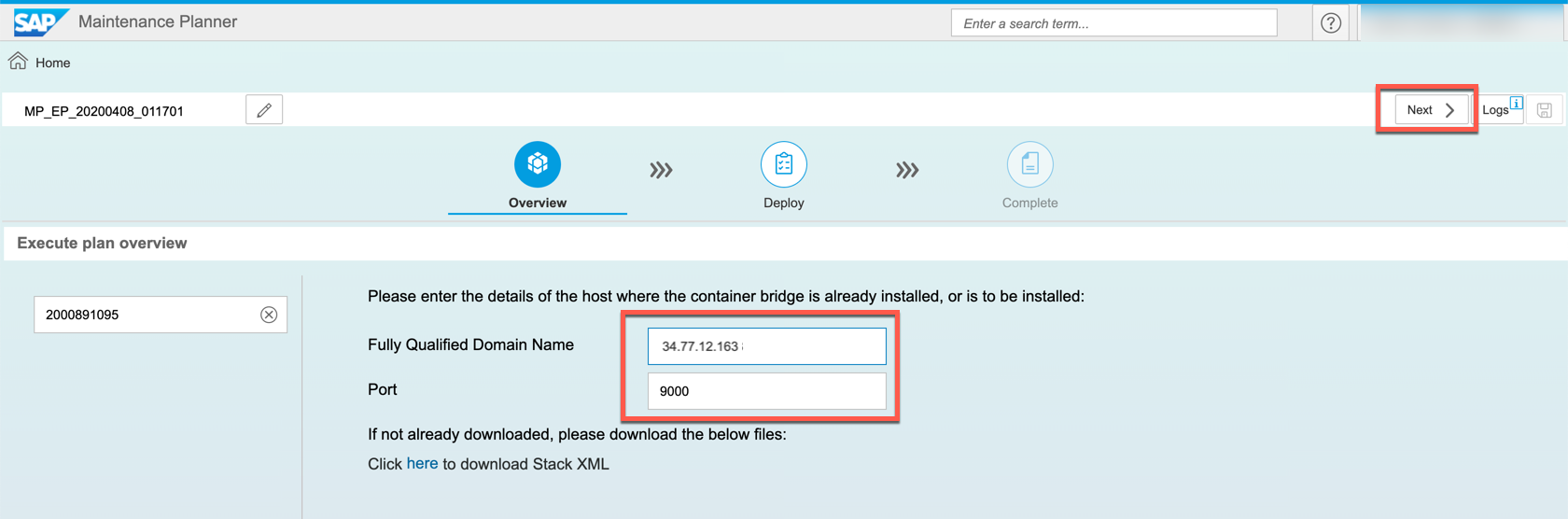

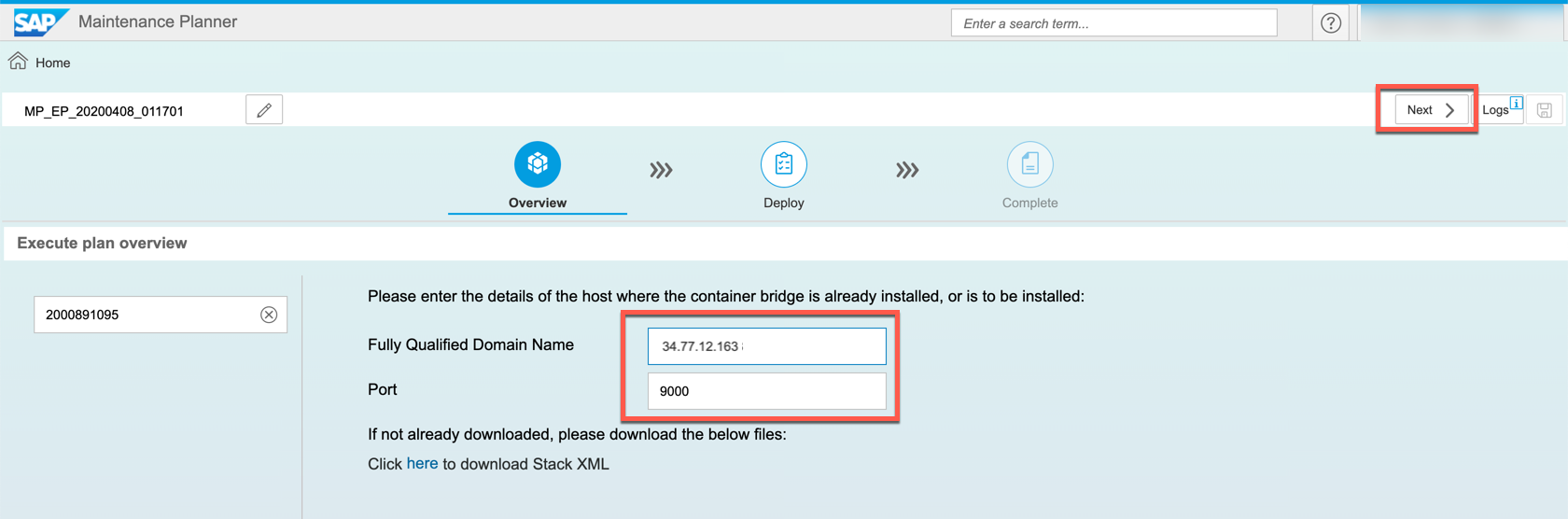

In SAP Maintenance planner click on Execute Plan and enter the IP address and port number of your SLC Bridge LoadBalancer or NodePort Kubernetes service (Obtained in Step 2 of this guide) and then click Next

Before you proceed, it is mandatory that you log on to the SLC Bridge tool in other to obtain an authentication token. Without this the SAP Maintenance Planner will not be able to upload the stack.xml file. Go to https://<SLC IP address>:<Port>/docs/index.html

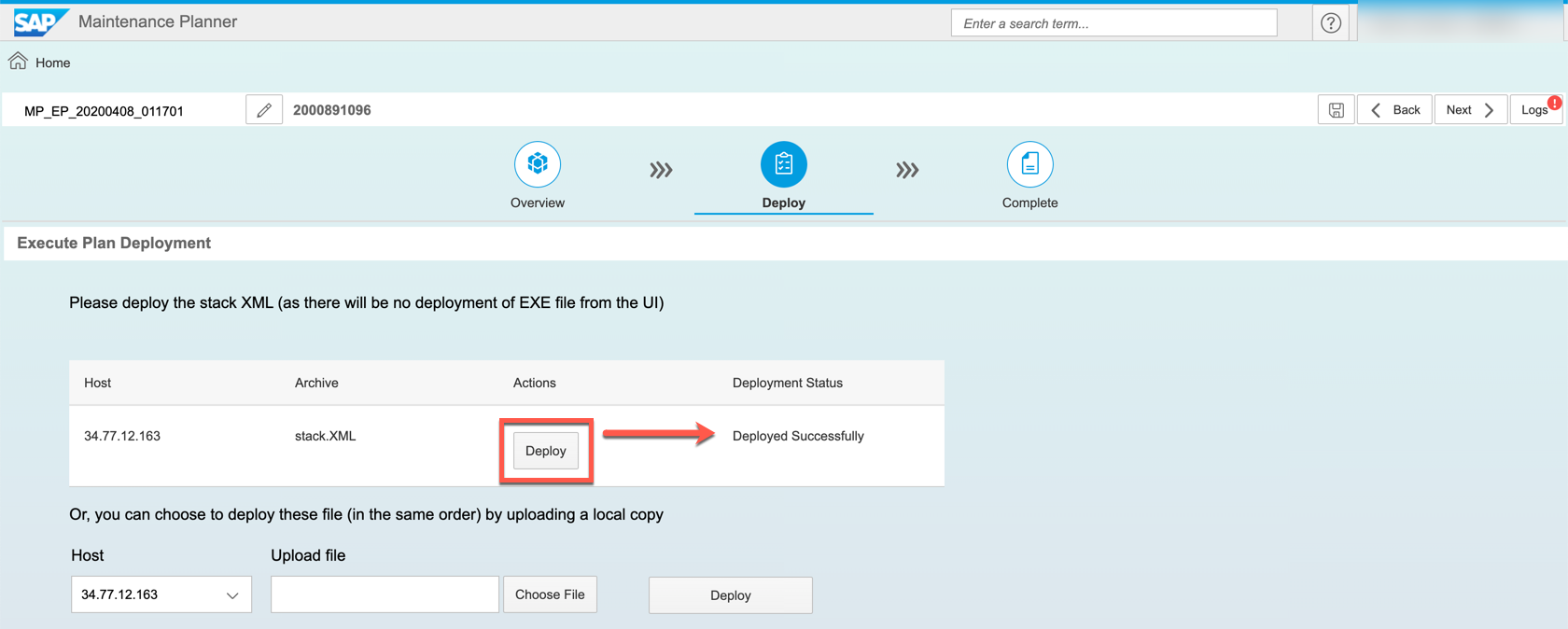

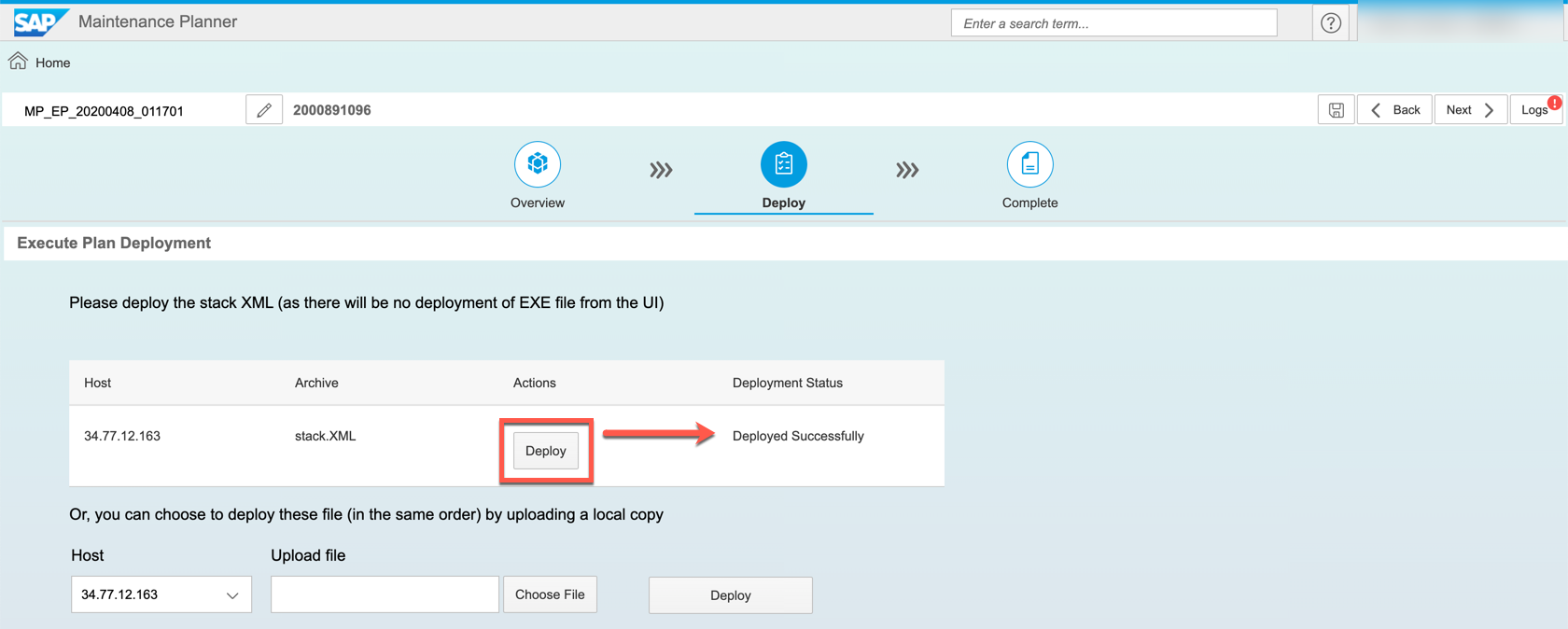

Once logged in, return to the SAP Maintenance Planner and click on the Deploy button to upload the MP_stack.xml file to your SLC Bridge in Kubernetes. There is no progress bar so you may not see a success message right away. After a success message is seen, click Next.

Note: If you do not see a success message then it may indicate the SAP Maintenance Planner is unable to reach your SLC Bridge pod, if so check your firewall/security group rules to ensure the incoming connection is not blocked.

The deployment of the MP_stack.xml file is complete and you can proceed to the SAP Data Intelligence installer web UI. In the next step of the Maintenance Planner a link to the Web UI is provided, but you can also go to http://<IP-address>:<port>/docs/index.html

Proceed to step 4.

Option B: Deploying the MP_stack.xml file to SLC Bridge via slcb command line

Since the SAP Maintenance Planner cannot communicate with Kubernetes clusters hidden behind firewalls or proxy servers you will have the option of uploading the Stack XML file via command line.

Download the MP_stack.xml file from the SAP Maintenance Planner to your workstation

On your workstation execute the command

You will be prompted for the complete SLC Bridge URL and the login credentials that were defined in step 2 of this guide. After this step the xml file will have been uploaded to the SLC Bridge in Kubernetes.

You now have the option to continue the installation via command line or cancel the current execution using CTRL+C and proceed to install via the Web UI at the URL that you received at the end of step 2 https://<IP_ADDRESS>:<PORT_NUMBER>/docs/index.html

Both the Web UI and the command-line are identical terms of the installation process, the only difference is visual.

If you choose to use the command line then select Option 1 (SAP DATA INTELLIGENCE 3 SP Stack 3.0) to continue. You can follow the instructions on Step 4 to complete the command line installation.

Step 4: Selecting a stack and defining installation parameters

The first prompt will ask for which stack to deploy. Each stack option is described in the official SAP Data Intelligence Installation guide.

Before being prompted for installation parameters the installer will first verify (but not copy) that all necessary SAP Data Intelligence images are available in SAP Docker Artifactory. This can take several minutes.

Notes on upgrades:

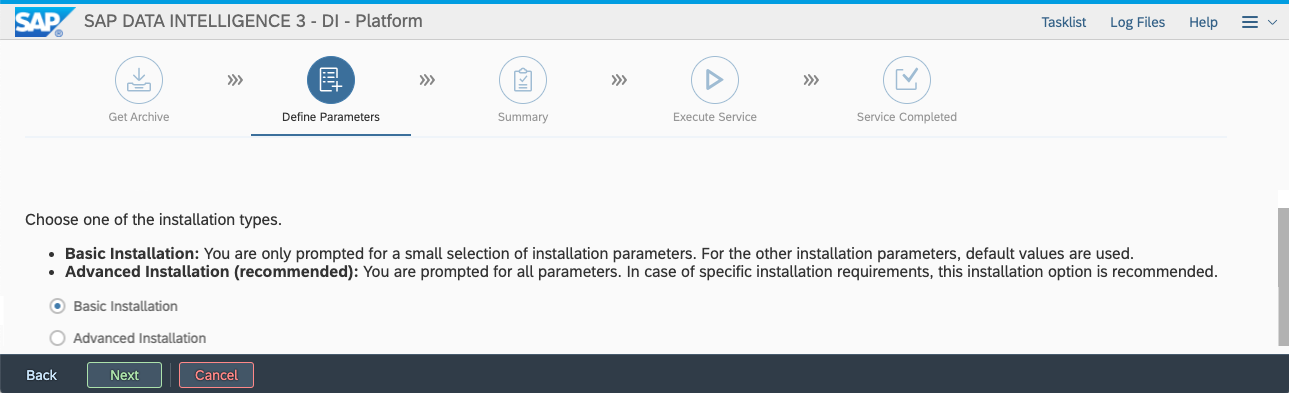

All parameters, including the differences between Basic and Advanced installation modes, are described in the official SAP Data Intelligence Installation guide.

It is highly recommended to deploy SAP Data Intelligence into a separate namespace from the SLC Bridge.

After answering all prompts you will be presented with a summary screen before proceeding with the installation or upgrade.

Step 5: Monitoring and troubleshooting the installation

The installer does not output logs or a detailed progress information during deployment. You can monitor for this by querying for the pod logs using the kubectl command line tool on your workstation

By default the SLC Bridge namespace is set to sap-slcbridge. The name of the pod depends on which stack you have chosen to install, for example if you are deploying the smallest stack, DI Platform, the pod name will be di-platform-product-bridge. The installer logs are stored in the productbridge container. See the example below.

If an installation is taking longer than expected then you may also check for the status of the pods in your target namespace for crashes or other errors, or if a pod is in status pending.

Step 6: Post-installation Configuration

Exposing the front-end

After installation is complete the SAP Data Intelligence front-end is by default not exposed to external traffic. This is typically done by manually deploying an ingress controller and an ingress Kubernetes resource. If you are not familiar with the concept of an ingress then it is highly recommend to read up on it before continuing.

The specific steps for deploying an ingress controller for each cloud vendor are covered in the official installation guide here and for on-premise platforms here.

Configuring container registry for Pipeline Modeler

If you deployed SAP Data Intelligence on Microsoft Azure or if you have a password-protected registry then you will have to provide the docker credentials, without this the Pipeline Modeler will be unable to push docker images to the registry. The exact steps to do this are covered here.

Installing permanent license key

All newly installed clusters come with a 90 day temporary license after this the temporary license will expire and you will no longer be able to log in. So be sure to import the new license right away to avoid unnecessary downtime. The exact steps to import the license are covered here.

How to uninstall SAP Data Intelligence and re-/uninstall the SLC Bridge

To uninstall SAP Data Intelligence use the SLC Bridge Web UI, specify the namespace you wish to work on, and choose the "Uninstall option". Do NOT delete the Kubernetes namespace where SAP Data Intelligence is installed as this leaves behind many other Kubernetes resources that would then have to be deleted manually.

To uninstall the SLC Bridge tool run the command slcb init command on your workstation. After specifying the namespace of where the SLC Bridge pod is running you will be prompted to re/uninstall it.

Below is a high-level summary of the installation changes:

- Further integration with SAP Maintenance Planner
  - All upgrades and installations require a stack.xml file generated by the Maintenance Planner to begin deployment
- No dependency on an installer jumpbox
  - The installer can be deployed onto Kubernetes directly from a workstation
  - Docker image mirroring and installation are performed from within the Kubernetes cluster, which reduces deployment time
- Reduced cluster resource requirements
  - Data Science Platform (DSP), Vora database stack and Diagnostic Framework are all optional and can be skipped to reduce resource strain

Prerequisites:

- Kubernetes cluster v1.14 or v1.15
- A private Docker container registry for mirroring images
- A workstation (Windows, MacOS or Linux) with the following components installed:
  - kubectl v1.14 or higher
  - helm, to deploy the nginx ingress controller after completing the installation (optional)

For a complete list of prerequisites please refer to the official SAP Data Intelligence 3.0 Installation Guide.
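The Kubernetes version requirement above can be checked with a few lines of shell. The following is only a sketch: the function name `k8s_version_supported` is made up for this example, and it looks at nothing but the major and minor version of a version string such as the one your cluster reports.

```shell
#!/usr/bin/env bash
# Returns 0 if a Kubernetes version string such as "v1.15.11" has a minor
# version listed in the prerequisites above (1.14 or 1.15), 1 otherwise.
# Hypothetical helper, not part of kubectl or slcb.
k8s_version_supported() {
  local version="${1#v}"          # strip an optional leading "v"
  local major="${version%%.*}"    # text before the first dot
  local rest="${version#*.}"      # text after the first dot
  local minor="${rest%%.*}"       # minor version, may carry a suffix like "15+"
  minor="${minor%%[!0-9]*}"       # keep the leading digits only
  [ "$major" = "1" ] && { [ "$minor" = "14" ] || [ "$minor" = "15" ]; }
}

# Try it on a few sample version strings.
for v in v1.14.10 v1.15.11 v1.16.8; do
  if k8s_version_supported "$v"; then
    echo "$v: supported"
  else
    echo "$v: not listed in the prerequisites"
  fi
done
```

In practice you would pass in the server version shown by `kubectl version` instead of the sample strings.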

Step 1: Download the SLC Bridge tool

In this guide it is assumed you are using a Linux machine, but commands in Windows or MacOS should be identical.

To deploy the installer into Kubernetes you will need the SLC Bridge (slcb) binary tool, which can be downloaded from the SAP Service Marketplace here. Copy this file to your workstation.

If you download the tool for Linux, the filename will misleadingly also have a Windows .EXE extension. Regardless of the operating system you are using, to make it easier to run commands I recommend renaming the SLCB01_XX_.EXE file to slcb and placing it in the /usr/bin directory. You may also need to make it executable using chmod.

    mv SLCB01_43-70003322.EXE /usr/bin/slcb
    chmod +x /usr/bin/slcb

Step 2: Initialize the SLC Bridge pod in Kubernetes

If you haven't done so already, ensure that your kubectl tool is configured to communicate with your Kubernetes cluster. A simple way to check is to look up the nodes of your cluster.

    kubectl get nodes

From your workstation execute the following command:

    slcb init

You will be prompted with a series of questions. Their descriptions can be found in the official installation guide here. Note: At this time there is no difference between "Typical" and "Expert" deployment mode.

When choosing between exposing the installer web UI using a LoadBalancer or a NodePort service, a LoadBalancer is the most common and preferred method.

To monitor or troubleshoot the status of the initialization you can query the Kubernetes namespace where the SLC Bridge was deployed. By default this namespace is set to sap-slcbridge.

    kubectl -n sap-slcbridge get all

Finally, in the terminal output of the slcb tool you should see the complete URL to the SLC Bridge Web UI, labelled slcbridgebase-service. Make a note of this IP address and port number. If you chose to deploy the SLC Bridge using a NodePort service, use the external/public IP address of one of your Kubernetes worker nodes instead (not the workstation IP address).

    ************************
    * Message *
    ************************

    Deployment "slcbridgebase" has 1 available replicas in namespace "sap-slcbridge"
    Service "slcbridgebase-service" is listening on "https://34.77.12.163:9000/docs/index.html"

    Choose action Next [n/<F1>]: n

Step 3: Generate stack.xml file in SAP Maintenance Planner

Go to the SAP Maintenance Planner at https://apps.support.sap.com/sap/support/mp and click on "Plan a new system" -> "Plan"

On the left menu, select "Container Based" -> "SAP Data Intelligence 3" -> 3.0 (03/2020)

Note on patches: SAP Maintenance Planner will automatically select the latest patch for a given service pack. The latest patch version of a service pack is not displayed in the SAP Maintenance Planner.

You must also specify which stack you want to install. Starting with SAP Data Intelligence 3.0 you can choose from three different installation stacks with an option to skip the deployment of the Vora database and the Diagnostics Framework (Kibana/Grafana).

Each stack option is described in the official SAP Data Intelligence Installation guide.

The Select Files step gives you the opportunity to download the slcb tool directly from the SAP Maintenance Planner but as it was already installed in step 1 we can skip this step.

Confirm the empty selection and click Next to continue to the Download Files step.

If your Kubernetes cluster is accessible from the public internet (e.g. deployed in the public cloud such as AWS) then proceed to option A in this guide.

If your cluster is not accessible from the public internet (e.g. on-premise Kubernetes hidden behind a corporate proxy, on a private cloud network, etc.), or if you prefer to use the command line to install SAP Data Intelligence, then proceed to option B in this guide.

Option A: Deploying MP_stack.xml file to SLC Bridge via Maintenance Planner

In SAP Maintenance Planner click on Execute Plan, enter the IP address and port number of your SLC Bridge LoadBalancer or NodePort Kubernetes service (obtained in Step 2 of this guide), and then click Next.

Before you proceed, it is mandatory that you log on to the SLC Bridge tool in order to obtain an authentication token. Without this the SAP Maintenance Planner will not be able to upload the stack.xml file. Go to https://<SLC IP address>:<Port>/docs/index.html

Once logged in, return to the SAP Maintenance Planner and click on the Deploy button to upload the MP_stack.xml file to your SLC Bridge in Kubernetes. There is no progress bar so you may not see a success message right away. After a success message is seen, click Next.

Note: If you do not see a success message, the SAP Maintenance Planner may be unable to reach your SLC Bridge pod. If so, check your firewall/security group rules to ensure the incoming connection is not blocked.
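When chasing a connectivity problem like this, it can help to first confirm that the SLC Bridge port answers at all. The sketch below builds the Web UI URL from the host and port noted in Step 2 and probes it with curl. The function names are made up for this example, and the `-k` flag assumes the bridge presents a certificate your machine does not trust. Note that a successful probe from your workstation does not prove that the Maintenance Planner can reach the same address from the internet.

```shell
#!/usr/bin/env bash
# Build the SLC Bridge Web UI URL from host and port (values from Step 2).
slcb_ui_url() {
  printf 'https://%s:%s/docs/index.html\n' "$1" "$2"
}

# Probe the URL from the current machine and print the HTTP status code.
# -k skips certificate verification (assumption: untrusted/self-signed cert).
# Any HTTP status, even 401, shows the port is reachable; curl prints 000
# when no connection could be made.
slcb_probe() {
  curl -k -s -o /dev/null --max-time 10 -w '%{http_code}\n' "$(slcb_ui_url "$1" "$2")"
}

# Example with the address from the sample output in Step 2.
slcb_ui_url 34.77.12.163 9000
```

Calling `slcb_probe 34.77.12.163 9000` with your own address and port then performs the actual request.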

The deployment of the MP_stack.xml file is complete and you can proceed to the SAP Data Intelligence installer web UI. In the next step of the Maintenance Planner a link to the Web UI is provided, but you can also go to https://<IP-address>:<port>/docs/index.html

Proceed to step 4.

Option B: Deploying the MP_stack.xml file to SLC Bridge via slcb command line

Since the SAP Maintenance Planner cannot communicate with Kubernetes clusters hidden behind firewalls or proxy servers, you have the option of uploading the Stack XML file via the command line.

Download the MP_stack.xml file from the SAP Maintenance Planner to your workstation.

On your workstation execute the command:

    slcb execute --useStackXML /path/to/MP_stack.xml

You will be prompted for the complete SLC Bridge URL and the login credentials that were defined in step 2 of this guide. After this step the xml file will have been uploaded to the SLC Bridge in Kubernetes.
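If you script this step, a small guard avoids starting slcb with a wrong path. The wrapper below is a sketch only: `upload_stack_xml` is a made-up name, and it does nothing beyond checking that the file exists and is not empty before calling the same `slcb execute --useStackXML` command shown above.

```shell
#!/usr/bin/env bash
# Refuse to call slcb unless the stack XML is a readable, non-empty file.
# Hypothetical wrapper; the slcb invocation itself is the one from this guide.
upload_stack_xml() {
  local stack_xml="$1"
  if [ ! -r "$stack_xml" ] || [ ! -s "$stack_xml" ]; then
    echo "stack XML not found or empty: $stack_xml" >&2
    return 1
  fi
  slcb execute --useStackXML "$stack_xml"
}
```

Usage would look like `upload_stack_xml /path/to/MP_stack.xml`; the prompts for URL and credentials then appear as usual.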

You now have the option to continue the installation via the command line, or to cancel the current execution using CTRL+C and proceed to install via the Web UI at the URL that you received at the end of step 2: https://<IP_ADDRESS>:<PORT_NUMBER>/docs/index.html

The Web UI and the command line are identical in terms of the installation process; the only difference is visual.

If you choose to use the command line then select Option 1 (SAP DATA INTELLIGENCE 3 SP Stack 3.0) to continue. You can follow the instructions in Step 4 to complete the command line installation.

    slcb execute --useStackXML MP_Stack_2000891052_2020047_.xml

    'slcb' executable information
    Executable: slcb
    Build date: 2020-04-03 07:30:22 UTC
    Git branch: fa/rel-1.1
    Git revision: 55125df84328acf8c97303aae5c978b0466b97b2
    Platform: linux
    Architecture: amd64
    Version: 1.1.44
    SLUI version: 2.6.57
    Arguments: execute --useStackXML MP_Stack_2000891052_2020047_.xml
    Working dir: /Downloads
    Schemata: 0.0.44, 1.4.44

    Url [<F1>]: https://34.77.12.163:9000/docs/index.html
    User: admin
    Password: *******

    Execute step Download Bridge Images

    ***************************
    * Stack XML File Uploaded *
    ***************************

    Successful Upload of Stack XML File
    You uploaded the Stack XML File "stack_2000891052.xml" (ID 2000891052). It contains:
    Product Version: SAP DATA INTELLIGENCE 3
    Support Package Stack: 3.0 (03/2020)
    S-User: S123456789
    Product Bridge Image: com.sap.datahub.linuxx86_64/di-forwarding-bridge:3.0.12
    SLC Bridge will now proceed to download the Product Bridge Images.

    Available Options
    1: + SAP DATA INTELLIGENCE 3 SP Stack 3.0 (03/2020) (ID 2000891052)
    2: + Planned Software Changes
    3: + Maintenance

    Select option 1 .. 3 [<F1>]: 1

Step 4: Selecting a stack and defining installation parameters

The first prompt will ask which stack to deploy. Each stack option is described in the official SAP Data Intelligence Installation guide.

Before being prompted for installation parameters the installer will first verify (but not copy) that all necessary SAP Data Intelligence images are available in SAP Docker Artifactory. This can take several minutes.

Notes on upgrades:

- Kubernetes must be upgraded to v1.14 or v1.15 prior to performing the upgrade

- Only upgrades from existing SAP Data Intelligence clusters or SAP Data Hub 2.7 Patch 4 are supported. Older installations of SAP Data Hub must first upgrade to 2.7.4

- The namespace of an existing DI/DH installation must be used when upgrading

- There are known issues with upgrading from SAP Data Hub 2.7. Before upgrading please read SAP Note 2908055 - SAP Data Intelligence 3.0 Upgrade Note

All parameters, including the differences between Basic and Advanced installation modes, are described in the official SAP Data Intelligence Installation guide.

It is highly recommended to deploy SAP Data Intelligence into a separate namespace from the SLC Bridge.
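As a sanity check before you answer the namespace prompt, the sketch below rejects a namespace that equals the SLC Bridge namespace or that is not a valid Kubernetes name. `check_di_namespace` is a made-up helper for illustration; the default bridge namespace `sap-slcbridge` is taken from Step 2.

```shell
#!/usr/bin/env bash
# Succeeds if the namespace differs from the SLC Bridge namespace and is a
# valid Kubernetes namespace name (RFC 1123 label: lowercase letters, digits
# and "-", starting and ending alphanumeric, at most 63 characters).
# Hypothetical helper, not part of the installer.
check_di_namespace() {
  local di_ns="$1" bridge_ns="${2:-sap-slcbridge}"
  if [ "$di_ns" = "$bridge_ns" ]; then
    echo "choose a namespace other than $bridge_ns" >&2
    return 1
  fi
  if [ "${#di_ns}" -gt 63 ] || ! [[ "$di_ns" =~ ^[a-z0-9]([-a-z0-9]*[a-z0-9])?$ ]]; then
    echo "not a valid namespace name: $di_ns" >&2
    return 1
  fi
}

check_di_namespace di3 && echo "di3 is fine"
```

If you installed the SLC Bridge into a non-default namespace, pass it as the second argument.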

After answering all prompts you will be presented with a summary screen before proceeding with the installation or upgrade.

Step 5: Monitoring and troubleshooting the installation

The installer does not output logs or detailed progress information during deployment. You can monitor progress by querying the pod logs with the kubectl command line tool on your workstation.

By default the SLC Bridge namespace is set to sap-slcbridge. The name of the pod depends on which stack you have chosen to install. For example, if you are deploying the smallest stack, DI Platform, the pod name will be di-platform-product-bridge. The installer logs are stored in the productbridge container. See the example below.

    # kubectl -n sap-slcbridge get pods
    NAME                             READY   STATUS    RESTARTS   AGE
    di-platform-product-bridge       2/2     Running   0          10m
    slcbridgebase-67fc885767-rvp45   2/2     Running   0          2d4h

    # kubectl -n sap-slcbridge logs di-platform-product-bridge -c productbridge
    ..
    ..
    2020-04-09T20:04:01.561Z INFO cmd/cmd.go:243 1> DataHub/di3/default [Pending]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> └── Spark/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ ├── SecurityOperator/di3/default [Pending] [Start Time: 2020-04-09 20:02:30 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ └── SecurityOperatorDeployment/di3/default [Pending] [Start Time: 2020-04-09 20:03:02 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ └── CertificateResource/di3/tls [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ └── CertificateResource/di3/jwt [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> └── VSystem/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ ├── Uaa/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ │ ├── Hana/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ │ └── SecurityOperator/di3/default [Pending] [Start Time: 2020-04-09 20:02:30 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ │ └── SecurityOperatorDeployment/di3/default [Pending] [Start Time: 2020-04-09 20:03:02 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ │ └── CertificateResource/di3/tls [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ │ └── CertificateResource/di3/jwt [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ ├── Hana/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── SecurityOperator/di3/default [Pending] [Start Time: 2020-04-09 20:02:30 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── SecurityOperatorDeployment/di3/default [Pending] [Start Time: 2020-04-09 20:03:02 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── CertificateResource/di3/tls [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── CertificateResource/di3/jwt [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> └── StorageGateway/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> └── Auditlog/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ ├── Hana/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── SecurityOperator/di3/default [Pending] [Start Time: 2020-04-09 20:02:30 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── SecurityOperatorDeployment/di3/default [Pending] [Start Time: 2020-04-09 20:03:02 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── CertificateResource/di3/tls [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> │ └── CertificateResource/di3/jwt [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> └── SecurityOperator/di3/default [Pending] [Start Time: 2020-04-09 20:02:30 +0000 UTC]
    2020-04-09T20:04:01.563Z INFO cmd/cmd.go:243 1> └── SecurityOperatorDeployment/di3/default [Pending] [Start Time: 2020-04-09 20:03:02 +00
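The component tree in these logs gets long, so a quick tally of the statuses can be easier to read than the raw output. The sketch below assumes the log format shown in this post, where the first bracketed word on a line (such as `[Pending]` or `[Unknown]`) is the component status. `summarize_statuses` is a made-up name.

```shell
#!/usr/bin/env bash
# Read installer log lines from stdin and count how often each status appears.
# Assumption: the status is the first [Word] on the line, as in the log above.
summarize_statuses() {
  sed -n 's/^[^[]*\[\([A-Za-z]*\)\].*/\1/p' \
    | sort \
    | uniq -c \
    | awk '{ print $2 ": " $1 }'
}

# Demo on three lines copied from the log above.
summarize_statuses <<'EOF'
2020-04-09T20:04:01.561Z INFO cmd/cmd.go:243 1> DataHub/di3/default [Pending]
2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> └── Spark/di3/default [Unknown] [Start Time: 0001-01-01 00:00:00 +0000 UTC]
2020-04-09T20:04:01.562Z INFO cmd/cmd.go:243 1> │ ├── SecurityOperator/di3/default [Pending] [Start Time: 2020-04-09 20:02:30 +0000 UTC]
EOF
```

Against a live installation you would pipe the logs in, for example `kubectl -n sap-slcbridge logs di-platform-product-bridge -c productbridge | summarize_statuses`. The same component can appear several times in the tree, so the counts are per log line, not per unique component.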

If an installation is taking longer than expected, you can also check the status of the pods in your target namespace for crashes, other errors, or pods stuck in Pending status.
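One way to spot problem pods quickly is to filter the plain-text listing that `kubectl get pods` prints. The following sketch uses awk to print only pods that are not `Running` or whose READY column shows fewer ready containers than expected (for example `1/2`). The function name `not_ready_pods` is made up, and pods in `Completed` state are skipped on the assumption that finished jobs are not a problem.

```shell
#!/usr/bin/env bash
# Read `kubectl get pods` output from stdin and print name, READY and STATUS
# for every pod that is not fully ready. The header line is skipped.
not_ready_pods() {
  awk 'NR > 1 && $3 != "Completed" {
    split($2, ready, "/")                 # "1/2" -> ready[1]=1, ready[2]=2
    if ($3 != "Running" || ready[1] != ready[2])
      print $1, $2, $3
  }'
}

# Demo on a short sample listing.
not_ready_pods <<'EOF'
NAME READY STATUS RESTARTS AGE
hana-0 1/2 Running 0 112s
spark-worker-0 2/2 Running 0 114s
EOF
```

With a live cluster the call would be `kubectl get pods -n <namespace> | not_ready_pods`, using the namespace you chose for SAP Data Intelligence.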

    kubectl get pods -n di3
    NAME                                      READY   STATUS    RESTARTS   AGE
    hana-0                                    1/2     Running   0          112s
    spark-master-7f7bf7885c-d2j4r             1/1     Running   0          114s
    spark-worker-0                            2/2     Running   0          114s
    spark-worker-1                            2/2     Running   0          106s
    vora-security-operator-74d6b755b6-c9thh   1/1     Running   0          5m21s

Step 6: Post-installation Configuration

Exposing the front-end

After installation is complete, the SAP Data Intelligence front-end is by default not exposed to external traffic. Exposing it is typically done by manually deploying an ingress controller and an ingress Kubernetes resource. If you are not familiar with the concept of an ingress, it is highly recommended to read up on it before continuing.

The specific steps for deploying an ingress controller for each cloud vendor are covered in the official installation guide here and for on-premise platforms here.
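To give a rough idea of what the ingress resource looks like, here is a sketch that prints a manifest for a given namespace and host name. Treat every detail as an assumption to verify against the official guide: the backend Service name `vsystem` and port `8797`, the NGINX annotations, and the TLS secret name `vsystem-tls-certs` are not taken from this post, and `render_ingress` is a made-up helper. The `networking.k8s.io/v1beta1` API matches the Kubernetes 1.14/1.15 clusters listed in the prerequisites; newer clusters use `networking.k8s.io/v1` with a different backend syntax.

```shell
#!/usr/bin/env bash
# Print an Ingress manifest for the given namespace and host name.
# Assumptions (check the official guide): Service "vsystem" on port 8797
# served over HTTPS, an NGINX ingress controller, and an existing TLS secret
# named "vsystem-tls-certs" in the same namespace.
render_ingress() {
  local namespace="$1" host="$2"
  cat <<EOF
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: vsystem
  namespace: ${namespace}
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
spec:
  tls:
  - hosts:
    - ${host}
    secretName: vsystem-tls-certs
  rules:
  - host: ${host}
    http:
      paths:
      - path: /
        backend:
          serviceName: vsystem
          servicePort: 8797
EOF
}

# Example: print the manifest for a placeholder host name.
render_ingress di3 di.example.com
```

Once the values are confirmed for your platform, the output can be piped to `kubectl apply -f -`.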

Configuring container registry for Pipeline Modeler

If you deployed SAP Data Intelligence on Microsoft Azure, or if you have a password-protected registry, you will have to provide the docker credentials. Without them the Pipeline Modeler will be unable to push docker images to the registry. The exact steps to do this are covered here.

Installing permanent license key

All newly installed clusters come with a 90-day temporary license. After this period the temporary license expires and you will no longer be able to log in, so be sure to import the permanent license right away to avoid unnecessary downtime. The exact steps to import the license are covered here.
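If you want a reminder date for the license, the arithmetic is simple enough to script. This sketch assumes the 90 days are counted from the installation date and that your `date` command accepts `-d` (GNU date does; BSD/macOS date uses different options). `temp_license_expiry` is a made-up name.

```shell
#!/usr/bin/env bash
# Print the date 90 days after the given installation date (YYYY-MM-DD), UTC.
# Assumption: the temporary license period starts on the installation date.
temp_license_expiry() {
  local installed_epoch
  installed_epoch=$(date -u -d "$1" +%s) || return 1
  date -u -d "@$((installed_epoch + 90 * 86400))" +%Y-%m-%d
}

temp_license_expiry 2020-04-13
```

For a cluster installed on 2020-04-13 this prints 2020-07-12.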

How to uninstall SAP Data Intelligence and re-/uninstall the SLC Bridge

To uninstall SAP Data Intelligence, use the SLC Bridge Web UI, specify the namespace you wish to work on, and choose the "Uninstall" option. Do NOT delete the Kubernetes namespace where SAP Data Intelligence is installed, as this leaves behind many other Kubernetes resources that would then have to be deleted manually.

To uninstall the SLC Bridge tool, run the slcb init command on your workstation. After specifying the namespace where the SLC Bridge pod is running you will be prompted to reinstall or uninstall it.

    slcb init

    < ... some text truncated for brevity ... >

    ************************
    * SLC Bridge Namespace *
    ************************

    Enter the Kubernetes namespace for the SLC Bridge.
    Namespace [<F1>]: slcb

    ************************
    * Deployment Option *
    ************************

    Choose the required deployment option.
    1. Reinstall same version
    > 2. Uninstall
